Metaphor

https://metaphor.systems/

Individual marks:

Help users practice new ways of imagining and formulating queries. Research suggests that effective prompting of LLMs can be challenging (Zamfirescu-Pereira et al., 2023) and that query formulation, even in mainstream web search engines, is complicated (Tripodi, 2018). Mollick (2023), though not writing specifically about search systems, has called for “large-scale public libraries of prompts”.

Comment: It may be interesting to also consider whether these queries demonstrate an advantage of the generative search capabilities or not.

No

The phrase "searchable repository of examples" comes from Zamfirescu-Pereira et al. (2023), who point to a "prompt book" for DALL·E. Other examples, also in the image generation domain, include Lexica (which markets itself as "The Stable Diffusion search engine"). See also the searchable LangChain Hub prompt repository for developers.

Partial

`# show-and-tell` channel in the Discord server: 'Show off what you've found with Metaphor!'

Support users in sharing their search experience with others. Research demonstrates significant value in users communicating about their experiences with tools. Better support for sharing interactions with these systems may improve users' collective ability to effectively question, complain about, teach, and organize around (or against) these tools (e.g., “working around platform errors and limitations” and “virtual assembly work” (Burrell et al., 2019), “repairing searching” (Griffin, 2022), search quality complaints (Griffin & Lurie, 2022), and end-user audits (Metaxa et al., 2021; Lam et al., 2022)), and may improve our “practical knowledge” of the systems (Cotter, 2022).

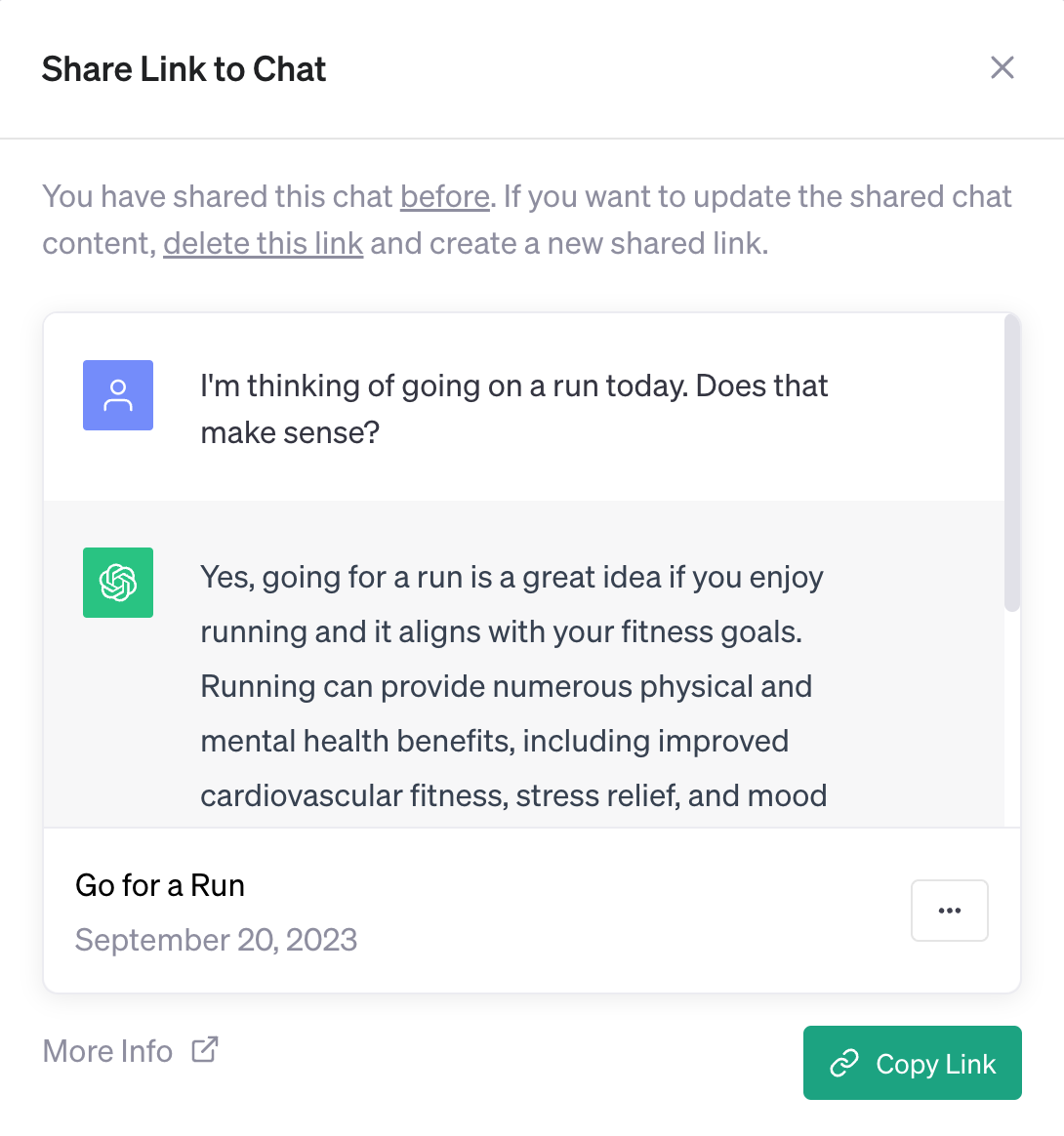

Is there a share interface? For example, this is supported on OpenAI's ChatGPT and Google's Bard. It is not supported on Anthropic's Claude.

No

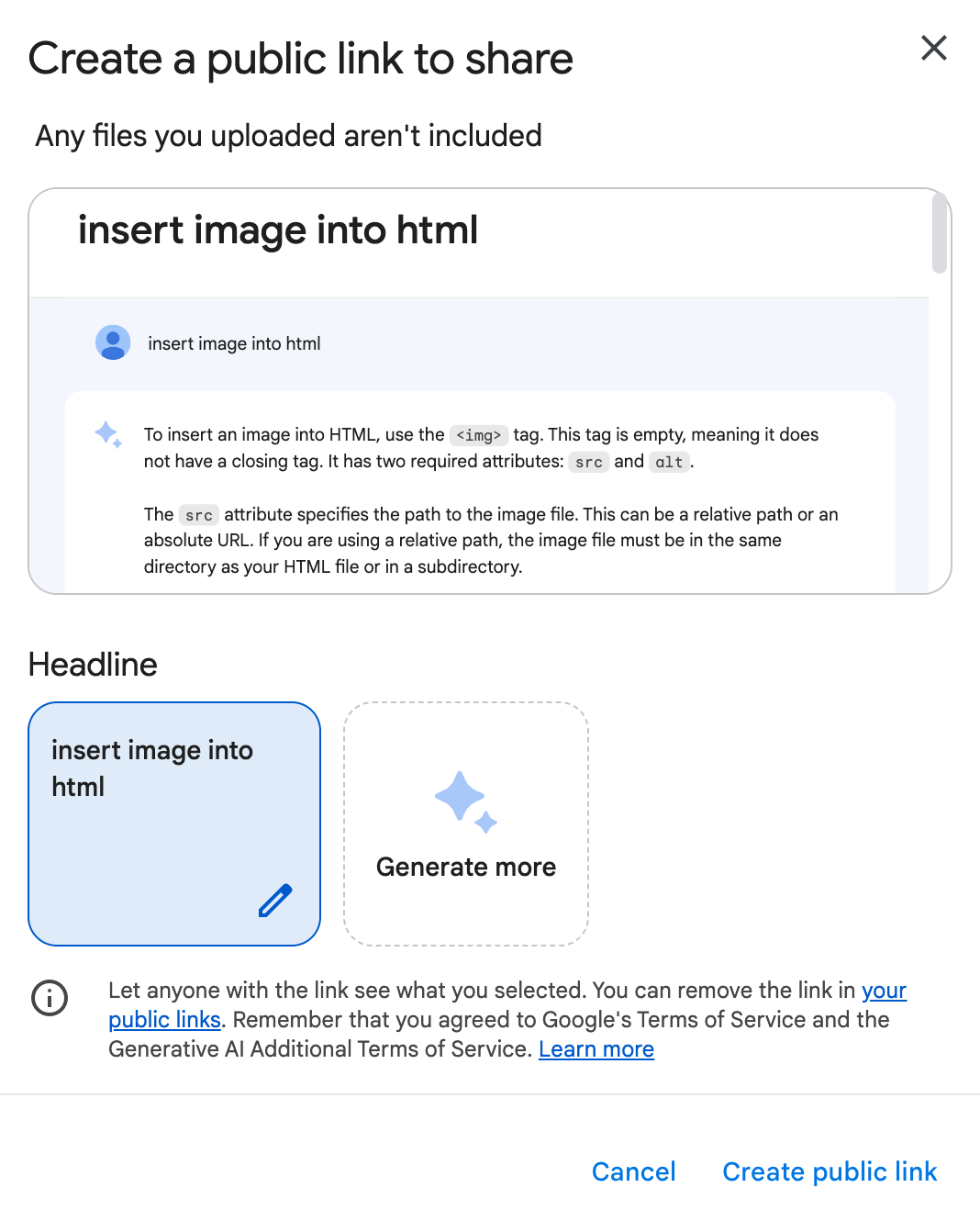

Is there an explanation of how the share interface works provided or linked to in the interface itself?

Examples: (1) The share interface in OpenAI's ChatGPT has a "More Info" link to its FAQ page, ChatGPT Shared Links FAQ. (2) The share interface in Google's Bard has a "Learn more" link to a Bard Help page, Share your Bard chats.

N/A

Example: Here is the interaction in ChatGPT, per the ChatGPT Shared Links FAQ: "If I continue the conversation after I create a shared link, will the rest of my conversation appear in the shared link?"

N/A

(1) Links to searches reproduce the search from the URL.

The quality of share cards may influence engagement on social platforms.

Links to searches produce a social share card.

Are share links (or links to searches) indexed and searchable in search engines (or the system itself)?

Permitting share links to be indexed by search engines may allow searchers elsewhere to find and evaluate the quality of the system's responses. Depending on what the interface discloses, this may pose a privacy risk to users. A search system may also view indexing explicitly as a growth strategy. Evaluation details: I am currently looking only at `site:` searches on Google. See links to conversations about this on Twitter here.

You can find links to searches in Google: g[site:https://metaphor.systems/search].
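The `site:` check above can be scripted by building the corresponding Google results URL. A minimal sketch, assuming Google's usual `https://www.google.com/search?q=` results URL; the `google_site_search_url` helper is hypothetical, and the sketch only builds the link rather than fetching or scraping any results.

```python
from urllib.parse import urlencode

def google_site_search_url(site_prefix: str, terms: str = "") -> str:
    """Build a Google results URL that restricts matches to site_prefix via the site: operator."""
    query = f"site:{site_prefix}"
    if terms:
        # Optional extra terms narrow the indexed pages further
        query += f" {terms}"
    # urlencode percent-encodes ':' and '/' and turns spaces into '+'
    return "https://www.google.com/search?" + urlencode({"q": query})

url = google_site_search_url("https://metaphor.systems/search")
print(url)
```

Opening the printed link in a browser reproduces the manual `site:` search; the result count Google reports is only a rough estimate.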


Support interoperability and extensibility.


Searching by URL has long been a staple approach to searching. It lets people link to (live) searches, to bookmark them or share them with others, and integrate searching into simple scripts. It also supports auditing.

Yes

Example: What is an LLM?
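As a rough illustration of the scripting use case, the sketch below builds search URLs for a fixed list of queries, e.g. to bookmark them or re-run them during an audit. It assumes that Metaphor searches live under `https://metaphor.systems/search` and take the query in a parameter named `q`; the parameter name and the `search_url` helper are hypothetical, so check them against a real search link before relying on this.

```python
from urllib.parse import urlencode

# Assumption: searches live under /search and take the query in a parameter
# named "q". The parameter name is hypothetical; confirm it against a real link.
BASE_URL = "https://metaphor.systems/search"
QUERY_PARAM = "q"

def search_url(query: str) -> str:
    """Return a bookmarkable URL that should rerun `query` when opened."""
    return f"{BASE_URL}?{urlencode({QUERY_PARAM: query})}"

# Audit-style usage: generate one link per query in a fixed query set
queries = ["What is an LLM?", "search engine audits"]
for q in queries:
    print(search_url(q))
```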


Searching by API may better support evaluations of the search results and the development of modifications or extensions building on or with the search system. We do not currently evaluate the performance of the API or restrictions on access. You.com, for instance, says: "If you are interested in being an early access partner please email api@you.com with your use case, background, and expected daily load." Other search engines that provide some form of API include the Brave Search API, Google (through the Custom Search JSON API of its Programmable Search Engine), Microsoft's Bing Web Search API, and the Yandex Search API. You can also get programmatic or tool-based access to web search results from many third-party providers that scrape results from search engines. For example, academic researchers have used data from SerpAPI (Zade et al., 2022) and Ahrefs (Williams & Carley, 2023).

Yes

Metaphor announced beta access to its search API on Apr 21, 2023. It is now generally available at platform.metaphor.systems.
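For comparison with URL-based searching, here is a sketch of preparing an API search request with only the Python standard library. The endpoint (`https://api.metaphor.systems/search`), the `x-api-key` header, and the `query`/`numResults` body fields are assumptions, not details confirmed by this evaluation; check the documentation at platform.metaphor.systems before use. The helper names are hypothetical.

```python
import json
import urllib.request

# Assumptions (unconfirmed): endpoint URL, "x-api-key" auth header,
# and the "query"/"numResults" JSON body fields.
API_URL = "https://api.metaphor.systems/search"

def build_search_request(query: str, api_key: str, num_results: int = 10) -> urllib.request.Request:
    """Prepare, but do not send, a JSON POST request for a search."""
    body = json.dumps({"query": query, "numResults": num_results}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={"Content-Type": "application/json", "x-api-key": api_key},
        method="POST",
    )

def run_search(req: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response (needs network access and a valid key)."""
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)

req = build_search_request("What is an LLM?", api_key="YOUR_API_KEY", num_results=5)
print(req.get_method(), req.full_url)
# results = run_search(req)  # uncomment with a real key
```

Separating request construction from sending keeps each request inspectable (useful for logging exactly what an audit sent) and testable without network access.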


Providing open-source models can advance the broader search landscape and help users better understand the capabilities and limitations of the search system.

No

Help users develop, submit, and share feedback. Mechanisms that support feedback will shape user expectations, user experiences, and future improvements to the search landscape. Some approaches may excessively conceal or otherwise control complaints rather than focusing on users.

This is distinct from having a Contact or Feedback link buried off-page or in a footer.

No

Not yet evaluated

Not yet evaluated

Sharing feedback from users in a transparent and privacy-preserving manner may improve the larger search landscape. Commons-based infrastructures, interfaces, and record repositories already exist for other purposes, such as IndexNow, Lumen, the ClaimReview schema, robots.txt, the OpenSearch protocol, and research datasets and benchmarks. Search systems may contribute to, respect, or draw on these resources. Research shows that search systems already rely heavily on Wikipedia (see especially McMahon et al., 2017). Note: The proximate cause of adding this criterion was the responses from the CEOs of Perplexity AI and You.com to an Oct 15, 2023 comment from Yann LeCun (Chief AI Scientist at Meta): "Human feedback for open source LLMs needs to be crowd-sourced, Wikipedia style."

No